


Adam Saenz

:

Sep 13, 2022 10:21:16 AM


In the 3rd blog in this series, “The SOTIF Scenarios”, we reviewed the four SOTIF scenarios: Known Safe, Known Unsafe, Unknown Safe, and Unknown Unsafe. In this article, we return to the four scenarios, but with a quality-oriented mindset, focusing on the why and how of defining and achieving SOTIF scenarios of high quality in a manner that is measurable and repeatable.

Safety Of The Intended Functionality (SOTIF) is defined in ISO 21448:2022, “Road vehicles — Safety of the intended functionality”, as the absence of unreasonable risk due to hazards resulting from functional insufficiencies of the intended functionality or by reasonably foreseeable misuse by persons. The SOTIF process is used to assess the functionality of a system under almost any circumstance (a scenario), and define its safe state regardless of whether:

Okay, that defines the “what”. But what about the “how” and “why”?

For this level of safety to be achieved, the system needs to be capable of making the correct decisions, every time, swiftly and reliably. For the system to make those decisions, it needs:

Let’s look at some of these considerations in further detail:

At its most fundamental level, driving is an exercise in data transfer, and it has been since the very first moments of the automobile. Even in its earliest crude infancy, the act of driving was a chaotic tossed salad of data transfer and feedback loops between the environment, the cars, and the humans driving them. It may have been analog, but it was still data.

Without data, the act of driving, in any form, simply could not have happened. Human brains provided both computational power and actuator control. The human senses served as the early system sensors, eventually augmented with gauges and other instrumentation. Drivers took the data the environment provided… visual cues, temperature, wind, rain, road conditions, light levels, smells, audio cues in the form of engine noises, and even the vibration transferred to the human through the seats, floorboards, pedals, and steering wheel… and applied logic derived from knowledge earned through firsthand experience and shared from outside sources.

The driver used all these elements to make supposedly sound decisions about how to drive the car. However, the real-world results were often mixed. Sometimes they failed spectacularly, but on average it was typically a better overall experience than the conveyances that came before, and it generally beat walking. It was an imperfect improvement.

What drove subsequent improvements was the need for a better and safer driving experience. But what enabled those improvements was better data: accurate, complete, and consistent, which in turn enabled the design of safer vehicle systems. The more consistent the data became, the clearer the picture became as to what needed to be improved and why. That clarity led to figuring out how to improve those systems, which in turn enabled the refinement of the driving experience.

For example, the earliest crude vehicles had no instrumentation. On some, the earliest onboard instrumentation was a temperature gauge built into an optional aftermarket radiator cap installed way out there on the front of the car. With the development and gradual improvement of instrumentation and its relocation to inside the car on a clustered dashboard control panel, precise data became available to the driver in real time. Hunches and guesses about temperatures, speeds, and engine parameters became known data points displayed on gauges with trustworthy numbers that humans could act upon with greater confidence.

Instrumentation became standardized, at least so far as to what kind of information a driver could expect to find on the dashboard and the meaning of those numbers; speed in M.P.H. means the same thing no matter the vehicle or its instrumentation layout. And the items measured by engineers and mechanics became more consistent as well. Techniques were developed to consistently measure the precise stopping distance of a vehicle at a given speed and weight, which in turn led to the development of vehicles that were more stable and could brake faster and more controllably. Brighter and more effective headlights were developed in part by creating the means to measure both their brightness and the shape of their beam in a consistent and repeatable setting. Increased visibility became a mathematical function of area and viewing angles that were measurable in the same consistent way regardless of the vehicle’s shape; in turn, this data gained greater influence over the vehicle’s cabin design and ergonomics, rather than the artistic design aesthetics alone having precedence.

This foundational point about the importance of data consistency is easily overlooked. But it is an important point that draws a bold thread linking the earliest days of automotive technology to the most modern mechatronic vehicle systems. Consistency in data is a key measure of the quality of the data. True then, true now.

There is real value today in remembering the importance of quality data. Without ensuring that your data is accurate and complete, without the adoption of common data types and the universal acceptance of the meaning of those numbers, without broad consistency across the automotive spectrum, and without a trustworthy level of proven reliability in the data, safe driving would have remained a distant dream. Instead, the quality of the driving experience was defined and measured with real numbers, performance could be measured, and improvements implemented. The fundamental building blocks for today’s quality digital data systems and all modern safety efforts were forged in the analog data crucible of the very beginning of the automotive realm.

As time went by, data accuracy improved with the increased sensitivity and ruggedness of improved instrumentation, but the data was still being processed in real time, by humans. It was the product of imperfect processing and subjective storage in the form of scribbled notes and fallible human memory. It was limited as much by the bandwidth constraints of the human brain as by the technology.

The shortcomings in these systems revealed the need to improve them beyond flawed human idiosyncrasies. Better sensors and instrumentation were developed to transcend the humans in order to better serve them. Today, the typical modern vehicle is equipped with sensors, actuators, and computational power thousands of times more advanced than those that helped the space program put men on the moon. The quantity and complexity of the electronics and data now being utilized, and the ability to utilize them with greater effectiveness, have necessitated the creation of safety standards like SOTIF.

Twenty years ago, a safety standard like SOTIF was not needed because there was no data used outside of human intervention. Today, SOTIF processes break a problem down accurately and completely into its base elements to the point that it can’t be broken down further, to define and help achieve truly safe states. The quality of the data remains an important factor, which is why sensors of different technologies are utilized together to help mitigate the shortcomings of using each type individually.

It doesn’t do much good to create high-quality SOTIF scenarios if your system can’t leverage them to perform useful work. The system needs to know what questions to ask, when to ask them, and what the correct answers are supposed to be. In a modern vehicle, this knowledge is programmed into the system and locked in for production.

To perform this computational work, the system needs a huge volume of relevant data to feed upon, in addition to clear definitions of safety. The data must be accurate, timely, and complete. The data serves as the fundamental building block for defining “what is”. But it also defines what something is supposed to be. The system analyzes the difference between what something actually is and what it is supposed to be, both to prioritize issues and to determine whether that condition is, at that moment, safe.
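As a minimal sketch of that "is versus supposed to be" comparison, the Python below compares measured values against expected values and ranks the unsafe ones. Everything in it is an assumption made for illustration: the `Check` class, the signal names, the numbers, and the symmetric tolerance are hypothetical and do not come from ISO 21448 or from any production system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Check:
    """One monitored quantity (hypothetical structure, for illustration only)."""
    name: str
    expected: float   # what the value is supposed to be
    measured: float   # what the sensors report it actually is
    tolerance: float  # largest deviation still treated as safe (assumed, must be > 0)

    @property
    def deviation(self) -> float:
        # Absolute difference between "what is" and "what is supposed to be".
        return abs(self.measured - self.expected)

    @property
    def is_safe(self) -> bool:
        return self.deviation <= self.tolerance


def prioritize(checks):
    """Return only the unsafe checks, worst first, ranked by deviation relative to tolerance."""
    unsafe = [c for c in checks if not c.is_safe]
    return sorted(unsafe, key=lambda c: c.deviation / c.tolerance, reverse=True)


# Illustrative values only.
checks = [
    Check("following_gap_m", expected=40.0, measured=22.0, tolerance=10.0),
    Check("lane_offset_m", expected=0.0, measured=0.2, tolerance=0.5),
    Check("speed_kph", expected=100.0, measured=112.0, tolerance=8.0),
]

for c in prioritize(checks):
    print(f"{c.name}: deviation is {c.deviation / c.tolerance:.2f}x the tolerance")
```

A real system would rarely use a single symmetric tolerance per signal, and the ranking rule here is only one possible way to prioritize. The point is simply that both the "is" and the "supposed to be" have to be trustworthy data before the comparison means anything.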

Now that we have explored some of the historical context that has driven forward the automotive safety efforts of today, and we better understand why quality data is important in creating SOTIF scenarios, we can explore what actually constitutes quality in the SOTIF scenarios themselves. Simplified, these considerations include:

An examination of some of the key elements of the SOTIF standard helps to reinforce these points.

In ISO 21448:2022, Section 4.2.1, the SOTIF standard states:

“The main objective of this document is to describe the activities and rationale used to ensure that the risk level associated with all identified SOTIF-related hazardous events is sufficiently low.

“The function, system specification and design include relevant use cases which, in turn, comprises several scenarios. These scenarios could contain triggering conditions that lead to harm… In order to avoid the harm, proper situational awareness is necessary.”

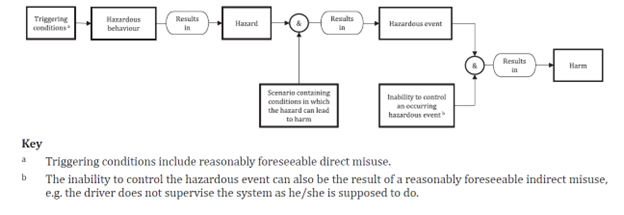

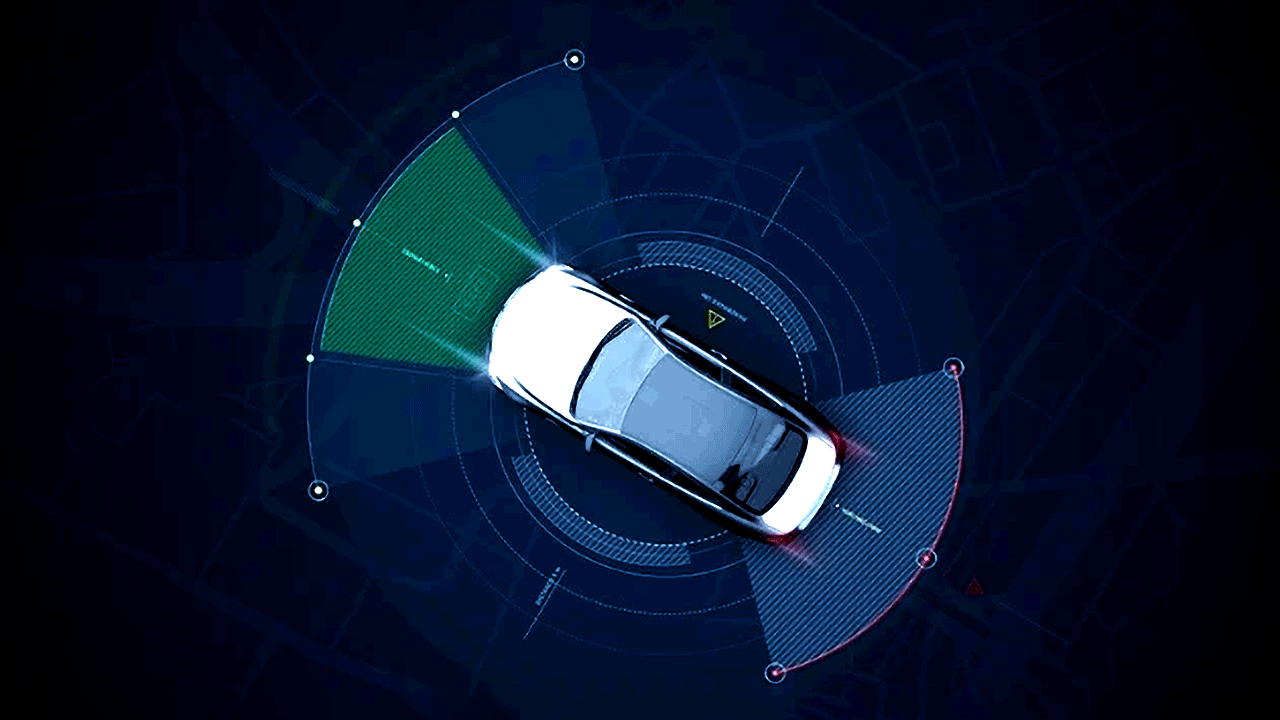

Figure 4 — Visualisation of a SOTIF-related hazardous event model

Further study of this flowchart underscores the cause-and-effect nature of hazardous events. The causes and ramifications of hazardous events can vary wildly, but their structure is consistent. And it is in that consistency that we find the first stable handle we can grab onto in order to wring logic out of the event chaos.

Another key takeaway from this model is the role that situational awareness plays in avoiding harm. This ties right back to the quality of the incoming data that we explored in the first half of this article. It is not enough to be aware; you must also be accurately aware.

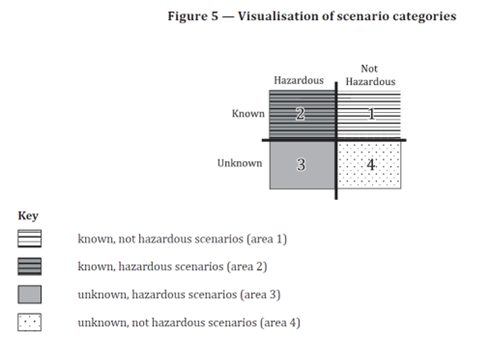

In ISO 21448:2022, Section 4.2.2, the four types of scenarios are classified, as illustrated in Fig. 5:

The standard covers the approaches to evaluating the four scenario categories, and their nuances, in detail. But there are some key quality-related points worth revisiting:

Statistics-based testing plays a key role in keeping the SOTIF process practical. Remember, the ultimate goal of the SOTIF activities is to evaluate the potentially hazardous behavior present in areas 2 and 3 (the hazardous areas) and to provide an argument that the residual risk caused by these scenarios is sufficiently low, i.e., at or below the acceptance criteria. For example, while the risk resulting from the known scenarios in area 2 (Known and Hazardous) must be explicitly evaluated, the risk resulting from unknown scenarios in area 3 (Unknown and Hazardous) is shown to be sufficiently small through statistics-based testing. This helps to prevent the unnecessary expenditure of resources and effort.
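To make the area numbering concrete, here is a small Python sketch that maps the "known" and "hazardous" attributes of a scenario to an area and to the kind of evaluation described above. Areas 2 and 3 follow the text directly. The numbering of areas 1 and 4, along with the names `Area`, `classify`, and `evaluation_approach`, are assumptions made for this illustration.

```python
from enum import Enum


class Area(Enum):
    # Areas 2 and 3 are named in the text above; 1 and 4 are assumed here.
    KNOWN_NOT_HAZARDOUS = 1
    KNOWN_HAZARDOUS = 2
    UNKNOWN_HAZARDOUS = 3
    UNKNOWN_NOT_HAZARDOUS = 4


def classify(known: bool, hazardous: bool) -> Area:
    """Map the two scenario attributes to one of the four areas."""
    if known:
        return Area.KNOWN_HAZARDOUS if hazardous else Area.KNOWN_NOT_HAZARDOUS
    return Area.UNKNOWN_HAZARDOUS if hazardous else Area.UNKNOWN_NOT_HAZARDOUS


def evaluation_approach(area: Area) -> str:
    """Summarize how the residual-risk argument treats each area (simplified)."""
    if area is Area.KNOWN_HAZARDOUS:
        return "explicit evaluation"
    if area is Area.UNKNOWN_HAZARDOUS:
        return "statistics-based testing"
    return "not part of the hazardous areas"


for known in (True, False):
    for hazardous in (True, False):
        area = classify(known, hazardous)
        print(f"area {area.value} ({area.name}): {evaluation_approach(area)}")
```

Of course, a scenario in area 3 cannot be labeled in advance, because by definition nobody knows it yet. The function only illustrates the taxonomy; the unknown scenarios themselves are reached indirectly, through testing.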

In order to develop a safe vehicle, rigorous engineering and quality management processes, applied with discipline and consistency, are essential.

It is important to note here that the causes of hazardous behavior from the intended functionality are not considered, only their consequences for safety.

Afterward, corresponding verification and validation test cases can be derived to evaluate if the resulting risk is sufficiently small.
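One common way to put a number on "sufficiently small" is a zero-failure statistical argument, sketched below in Python. It assumes hazardous events occur independently at a constant rate (a Poisson model), so that observing no events over an exposure T supports the claim that the rate is at most -ln(1 - C) / T with confidence C. The acceptance criterion and confidence level in the example are hypothetical, and this is not presented as the method ISO 21448 requires, only as one illustration of statistics-based reasoning.

```python
import math


def required_exposure(max_rate: float, confidence: float) -> float:
    """Event-free exposure needed to claim rate <= max_rate at the given confidence.

    Assumes a Poisson model: P(no events in T) = exp(-rate * T).
    The result is in the inverse unit of max_rate (e.g. km if the rate is per km).
    """
    if max_rate <= 0 or not 0 < confidence < 1:
        raise ValueError("max_rate must be > 0 and confidence must be in (0, 1)")
    return -math.log(1.0 - confidence) / max_rate


def rate_upper_bound(exposure: float, confidence: float) -> float:
    """Upper bound on the event rate after `exposure` with zero observed events."""
    if exposure <= 0 or not 0 < confidence < 1:
        raise ValueError("exposure must be > 0 and confidence must be in (0, 1)")
    return -math.log(1.0 - confidence) / exposure


# Hypothetical acceptance criterion: at most one hazardous event per million km.
target_rate_per_km = 1e-6
km_needed = required_exposure(target_rate_per_km, confidence=0.95)
print(f"Event-free distance needed: {km_needed:,.0f} km")
```

With these illustrative numbers the answer comes out at roughly three million event-free kilometers, which is a useful reminder of why the quality and relevance of test scenarios matter as much as their quantity. A single observed event changes the calculation, and real acceptance criteria are defined per project.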

High-quality SOTIF scenarios cannot be defined if the overall team is in disarray. As stated in ISO 21448:2022, Section 4.2.2,

“In case of a distributed product development, a development interface agreement (DIA) is defined between all involved parties. The goal of the DIA is to confirm, in the early stages of a project, all responsibilities of the SOTIF activities and that adequate technical information will be exchanged between the development parties.”

At this point, it is important for the DIA to be tailored to meet the unique requirements, capabilities, and expectations of the business entities involved. The process for doing so is detailed in the standard, but the importance of this work cannot be overstated. This is the document that will drive and shape all subsequent SOTIF work on the product, figuratively, literally, and legally. A lot of time, effort, and resources will be allotted and predicated on what is detailed therein. Clarity and completeness, qualities whose importance has already been underscored elsewhere in this article, are especially important here.

The automotive industry in general is still trying to wrap its arms around the challenge of finding the optimal way of integrating SOTIF organically into the design process from the start. The standards certainly help, but the task of integrating SOTIF can be eased somewhat by reinforcing the importance of quality data. The automotive realm has seen the consequences of allowing quality to slip. Quality data acted upon with discipline is the foundation upon which SOTIF success is built.
