What is ASPICE in Automotive?

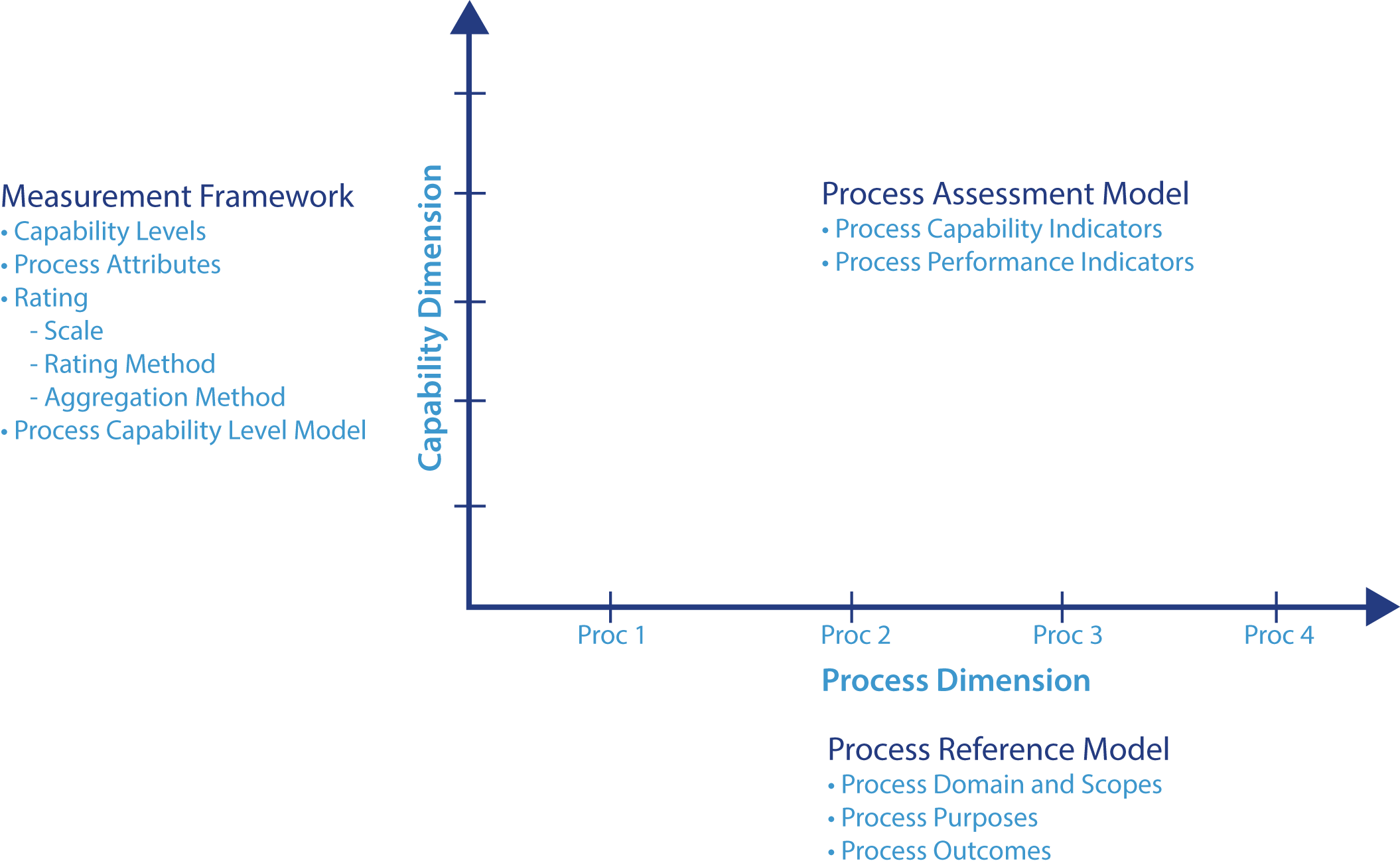

What is ASPICE? Automotive Software Performance Improvement and Capability Determination, or ASPICE, is a standard that provides a framework for...


4 min read

Marty Muse: Apr 30, 2026 9:16:45 AM


Most model-based systems engineering (MBSE) programs do not survive their first compliance audit. The tools are licensed. The team has been trained. The digital thread looks credible in a demo. Then an ISO 26262 or ASPICE assessor pulls a single requirement at ASIL B or above and asks a question the team cannot answer in minutes: show me every artifact downstream of this requirement, and show me the verification evidence that closes the loop. The room goes quiet. That silence is what an MBSE audit measures.

Two regulatory shifts have raised the bar in the last few years. ASPICE 4.0 sharpened base practice expectations on bidirectional traceability between SYS, SWE, and supporting processes, and assessors are increasingly unwilling to accept indirect or claim-based evidence. ISO 26262 Part 8, the supporting-processes part that governs configuration management and change management, has been interpreted more strictly in the wake of field failures traced to documentation gaps. AI-generated requirements, design artifacts, and verification scripts compound the pressure: they multiply the volume of artifacts in the digital thread without automatically extending the trace links that hold the thread together.

The consequence for engineering leaders is concrete. A program that breezed through its assessment a few years ago can fail an equivalent assessment today on the same artifacts, because the assessor's bar has moved. The cost is time. Rework cycles add weeks or months to a certification timeline. On a program bound for Start of Production (SOP), six weeks of safety-case rework can cost a launch window.

An MBSE audit does not measure tool selection. It does not measure the model's size. It does not measure how much training the team has had. Those are inputs.

The audit measures one thing: whether, on demand, the team can trace any one element of the digital thread to every other element that depends on it or supports it. Bidirectional. In minutes, not days. Against any baseline date the assessor names.

That single capability is what digital-thread maturity means in practice, and it is what assessors are trained to test. Five dimensions structure the test:

1. Requirement-to-architecture traceability. Every system requirement links to the architecture element or elements that realize it.

2. Architecture-to-design coverage. Every architecture element decomposes into one or more design artifacts, with no orphans on either end.

3. Design-to-verification coverage. Every safety-critical design element in the model has at least one verification artifact.

4. Bidirectional change propagation. A requirement change automatically triggers a documented review of all downstream architecture, design, and verification artifacts.

5. Configuration baseline integrity. The team can recreate the exact state of the digital thread at any baseline date.
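The first three dimensions reduce to coverage checks over trace links, which can be sketched in a few lines. The example below is a minimal illustration, not a description of any tool's API: the artifact IDs, the `ARTIFACTS` and `LINKS` data, and the function names are all hypothetical, and it simplifies dimension 3 by treating every design element as safety-critical.

```python
from collections import defaultdict

# Hypothetical artifacts (ID -> kind) and trace links (upstream ID, downstream ID).
ARTIFACTS = {
    "REQ-1": "requirement",
    "REQ-2": "requirement",
    "ARCH-1": "architecture",
    "DES-1": "design",
    "DES-2": "design",
    "DES-3": "design",
    "TEST-1": "verification",
}
LINKS = [
    ("REQ-1", "ARCH-1"),
    ("ARCH-1", "DES-1"),
    ("ARCH-1", "DES-2"),
    ("DES-1", "TEST-1"),
]


def build_index(links):
    """Index each link in both directions so the thread can be walked either way."""
    downstream, upstream = defaultdict(set), defaultdict(set)
    for src, dst in links:
        downstream[src].add(dst)
        upstream[dst].add(src)
    return downstream, upstream


def missing_downstream(artifacts, links, kind, target_kind):
    """Artifacts of `kind` with no direct link down to any `target_kind` artifact."""
    downstream, _ = build_index(links)
    return sorted(
        a for a, k in artifacts.items()
        if k == kind and not any(artifacts[d] == target_kind for d in downstream[a])
    )


def missing_upstream(artifacts, links, kind, source_kind):
    """Artifacts of `kind` with no direct link up to any `source_kind` artifact."""
    _, upstream = build_index(links)
    return sorted(
        a for a, k in artifacts.items()
        if k == kind and not any(artifacts[u] == source_kind for u in upstream[a])
    )


report = {
    # Dimension 1: requirements that no architecture element realizes.
    "requirements without architecture": missing_downstream(
        ARTIFACTS, LINKS, "requirement", "architecture"),
    # Dimension 2: orphans on either end of the architecture-to-design step.
    "architecture without design": missing_downstream(
        ARTIFACTS, LINKS, "architecture", "design"),
    "design without architecture": missing_upstream(
        ARTIFACTS, LINKS, "design", "architecture"),
    # Dimension 3: design elements with no verification artifact.
    "design without verification": missing_downstream(
        ARTIFACTS, LINKS, "design", "verification"),
}

for finding, ids in report.items():
    print(f"{finding}: {ids}")
```

With this sample data, `REQ-2` has no architecture element, `DES-3` has no architecture parent, and `DES-2` and `DES-3` have no verification artifact. A real check would read the links from the modeling and requirements tools instead of a hard-coded list.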

Dimensions 1 and 2 are typically healthy in programs that have invested in MBSE at all. The up-front modeling effort handles them. The pattern in audit findings is more specific: most failures cluster around dimensions 3, 4, and 5.

Dimension 3: design-to-verification coverage. The auditor picks any verification artifact and asks: what design element does this verify, what requirement does that design element satisfy, and what was the original hazard analysis output that produced that requirement? On a healthy program, the team walks the chain in two or three minutes inside the model. On most programs, tests live in a spreadsheet that groups them against features rather than design elements, coverage is reported as a percentage with no traceable foundation, and the chain breaks at the first transition. The fix is not more tests. The fix is binding verification artifacts to the model rather than to a parallel verification system, which usually means a tool integration project sized at three to six engineering weeks.
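The chain walk the auditor asks for can be sketched as an upstream traversal. This is a minimal illustration under stated assumptions: the `UPSTREAM` mapping, the artifact IDs, and the `HAZ-` prefix convention for hazard analysis outputs are hypothetical, and the links are assumed to contain no cycles.

```python
# Hypothetical upstream links: each artifact maps to what it verifies,
# satisfies, or was derived from, one step up the chain.
UPSTREAM = {
    "TEST-42": ["DES-7"],   # test verifies a design element
    "DES-7": ["REQ-12"],    # design element satisfies a requirement
    "REQ-12": ["HAZ-3"],    # requirement derives from a hazard analysis output
    "TEST-99": [],          # test grouped by feature, bound to no design element
}


def upstream_chains(artifact, upstream):
    """Return every path from `artifact` up to an artifact with no upstream link."""
    parents = upstream.get(artifact, [])
    if not parents:
        return [[artifact]]
    return [
        [artifact] + chain
        for parent in parents
        for chain in upstream_chains(parent, upstream)
    ]


def chain_is_complete(chain, root_prefix="HAZ-"):
    """A chain closes the loop only if it ends at a hazard analysis output."""
    return chain[-1].startswith(root_prefix)


for test_id in ("TEST-42", "TEST-99"):
    for chain in upstream_chains(test_id, UPSTREAM):
        status = "complete" if chain_is_complete(chain) else "BROKEN"
        print(" -> ".join(chain), f"[{status}]")
```

Here `TEST-42` walks all the way back to `HAZ-3`, while `TEST-99` stops at itself, which is the "chain breaks at the first transition" case.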

Dimension 4: bidirectional change propagation. The auditor walks the team through the last requirement change at ASIL B or higher and asks where the documented impact analysis lives. On a healthy program, impact analysis is an artifact, generated when the change is reviewed and stored against the change request. On most programs, change requests live in Jira, model changes occur in the modeling tool, and downstream verification reviews (when they occur) take place in spreadsheets. Three systems, no integration, individual engineers remembering. Six months into a program, somebody always forgets. The fix is enforcement before integration: a requirement change must produce a documented impact analysis before it closes, even if that analysis is initially manual.
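Enforcement before integration can be as simple as a gate on the close action. The sketch below assumes a hypothetical `ChangeRequest` record and `close_change_request` function; it does not model any real issue tracker's API, and the impact analysis reference may point to a manually written document.

```python
from dataclasses import dataclass, field


@dataclass
class ChangeRequest:
    cr_id: str
    requirement_id: str
    asil: str                                   # "QM", "A", "B", "C", or "D"
    impact_analysis: str = ""                   # reference to the stored analysis
    reviewed_artifacts: set = field(default_factory=set)
    status: str = "open"


class ImpactAnalysisMissing(Exception):
    """Raised when a change request cannot close for lack of review evidence."""


def close_change_request(cr, downstream_artifacts):
    """Close `cr` only if an impact analysis exists and covers every downstream artifact."""
    if not cr.impact_analysis.strip():
        raise ImpactAnalysisMissing(f"{cr.cr_id}: no impact analysis on record")
    unreviewed = set(downstream_artifacts) - cr.reviewed_artifacts
    if unreviewed:
        raise ImpactAnalysisMissing(
            f"{cr.cr_id}: downstream artifacts not reviewed: {sorted(unreviewed)}")
    cr.status = "closed"
    return cr


cr = ChangeRequest("CR-101", "REQ-12", asil="B")
downstream = {"ARCH-4", "DES-7", "TEST-42"}     # hypothetical downstream set

try:
    close_change_request(cr, downstream)
except ImpactAnalysisMissing as err:
    print("blocked:", err)

# Once the analysis is stored and every downstream artifact is reviewed, it closes.
cr.impact_analysis = "IA-2026-014"              # hypothetical document reference
cr.reviewed_artifacts = set(downstream)
print(close_change_request(cr, downstream).status)
```

The gate does not care how the analysis was produced. It only refuses to close a change that has no analysis stored against it or that leaves a downstream artifact unreviewed.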

Dimension 5: configuration baseline integrity. The auditor asks the team to show the digital thread state at the SOP-3 milestone, then the current state, and then the change requests that account for the differences. On a healthy program, baselines tag a coordinated set of versions across tools, and recreating any baseline takes minutes. On most programs, baselines live in Jira labels, on shared drives, and in tool-specific repositories. Recreating the SOP-3 state requires manual reconstruction with judgment calls at every step about what was baselined and what was in flight. The fix is software configuration management discipline applied across MBSE tools, which requires either a configuration management tool spanning the toolchain or a manual baseline ritual owned and enforced by engineering leadership.
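One way to picture a baseline that "tags a coordinated set of versions" is a manifest mapping each artifact to its version in its home tool. The sketch below is illustrative only: the manifests, version labels, and change request data are hypothetical, and a real manifest would be exported from the configuration management tooling.

```python
# Hypothetical baseline manifests: artifact ID -> version in its home tool.
BASELINE_SOP3 = {"REQ-12": "v3", "ARCH-4": "v7", "DES-7": "v2", "TEST-42": "v1"}
BASELINE_NOW = {"REQ-12": "v4", "ARCH-4": "v7", "DES-7": "v3",
                "TEST-42": "v1", "TEST-43": "v1"}

# Hypothetical change requests and the artifacts each one touched.
CHANGE_REQUESTS = {"CR-101": {"REQ-12", "DES-7"}}


def diff_baselines(old, new):
    """List artifacts added, removed, or changed in version between two baselines."""
    return {
        "added": sorted(set(new) - set(old)),
        "removed": sorted(set(old) - set(new)),
        "changed": sorted(a for a in set(old) & set(new) if old[a] != new[a]),
    }


def unexplained(diff, change_requests):
    """Differences between baselines that no change request accounts for."""
    touched = set().union(*change_requests.values()) if change_requests else set()
    differing = set(diff["added"]) | set(diff["removed"]) | set(diff["changed"])
    return sorted(differing - touched)


delta = diff_baselines(BASELINE_SOP3, BASELINE_NOW)
print("delta:", delta)
print("unexplained:", unexplained(delta, CHANGE_REQUESTS))
```

In this sample, `REQ-12` and `DES-7` changed under `CR-101`, while `TEST-43` appeared with no change request behind it. That last item is the kind of gap the auditor's third question is designed to surface.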

LHP runs the MBSE Audit as a four-week engagement. Week one is discovery and tool inventory. Week two samples the digital thread across all five dimensions, with greater weight on higher-ASIL elements. Week three converts findings into a prioritized remediation roadmap. Week four delivers the executive briefing and a capability-transfer session for the MBSE team. The methodology is tool-agnostic across Cameo Systems Modeler, Capella, IBM Rhapsody, Polarion, Jama Connect, and IBM DOORS. LHP returns six months after the engagement for a one-week re-audit that measures progress against the original baseline.

The decisive factor in a model-based systems engineering audit is not technological. The five dimensions, the structure, and the re-audit are tools. The work is leadership: deciding that audit-credible traceability is a program priority, naming an owner, and treating MBSE health as a leadership-cadence topic rather than an audit-cadence topic. The programs that survive their first audit do so because their engineering leaders made that decision twelve months earlier. For the programs that did not, the gaps are still discoverable in 2026; the difference is the cost of finding out.

Since 2001, LHP Engineering Solutions has helped companies deliver technology that must perform as intended, every time. Our clients operate in safety-critical, operation-critical, and mission-critical environments such as on-highway, off-highway, aerospace, defense, and oil & gas, where failure is not an option, and delays cost market share.

LHP helps organizations design, architect, validate, and monitor complex systems. Our global team of engineers supports the development of advanced technologies, including high-voltage power electronics, hybrid and electric powertrain controls, connectivity, and ADAS platforms, enabling OEMs and Tier-1 suppliers to bring next-generation products to market quickly and with confidence.

In compliance-driven industries, LHP uses its model-based systems engineering (MBSE) approach, enhanced by AI, to help companies move quickly while meeting rigorous standards, including functional safety, ASPICE, and cybersecurity. Our teams have helped global technology companies achieve functional safety certification and ASPICE compliance in months rather than years, and have established enterprise-grade safety and cybersecurity management systems for leading OEMs.

When organizations must make major technology leaps, such as launching next-generation platforms, future-proofing vehicle architectures, or proving new concepts to secure market-defining programs, LHP delivers the engineering disciplines, solutions, and on-time execution required to succeed.

Because in industries where technology must perform as intended, precision engineering matters.
